You need to get your head around the concept of aliasing.

Nyquist theory states that the highest frequency a digital sampling system can represent will always be half the samplerate, so this upper limit is known as the Nyquist frequency. The problem is, if you try to represent signals higher than nyquist they don't just disappear: they actually end up reflected back down below nyquist where they become a particular type of distortion known as aliasing.

Aliasing is the reason why your AD converters need filters: everything above nyquist must be removed before conversion, as there is no way to separate aliasing from the wanted signal after it has occurred.

However, aliasing can also occur when processing digital signals: any process that adds extra harmonics risks inadvertently adding components above nyquist.

As an example, let's take a sine wave at 6KHz, and let's simplify the maths by setting a samplerate of exactly 40KHz, so nyquist is exactly 20KHz. I'm going to saturate the sine wave with a distortion effect to generate extra harmonics, which should appear at the following frequencies:

| Harmonic | 2nd | 3rd | 4th | 5th | 6th |
|---|---|---|---|---|---|
| Frequency | 12K | 18K | 24K | 30K | 36K |

However, components above nyquist would be reflected back down as aliasing, so we would actually get the following (the 4th, 5th and 6th harmonics are the aliased ones):

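| Harmonic | 2nd | 3rd | 4th | 5th | 6th |
|---|---|---|---|---|---|
| Frequency | 12K | 18K | 16K | 10K | 4K |
| Aliased? | no | no | yes | yes | yes |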
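If you want to check the reflection arithmetic yourself, here is a minimal Python sketch. The function name `alias_frequency` is just something I made up for illustration, and it only models where an ideal component lands, not its level:

```python
def alias_frequency(freq_hz, sample_rate_hz):
    """Return where a component at freq_hz lands after sampling at sample_rate_hz."""
    nyquist = sample_rate_hz / 2
    # Sampling cannot tell f apart from f plus any multiple of the samplerate.
    folded = freq_hz % sample_rate_hz
    # Anything left in the upper half of that range is mirrored around nyquist.
    return sample_rate_hz - folded if folded > nyquist else folded


fundamental = 6000
sample_rate = 40000
for n in range(2, 7):
    true_freq = n * fundamental
    landed = alias_frequency(true_freq, sample_rate)
    tag = "aliased" if landed != true_freq else "ok"
    print(f"harmonic {n}: {true_freq} Hz -> {landed} Hz ({tag})")
```

For the 6KHz example at 40KHz this reproduces the 12K, 18K, 16K, 10K, 4K sequence above.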

If we oversample by 2x, the samplerate becomes 80KHz and nyquist will now be 40KHz, so we can go much further:

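| Harmonic | 2nd | 3rd | 4th | 5th | 6th | 7th | 8th | 9th | 10th |
|---|---|---|---|---|---|---|---|---|---|
| Frequency | 12K | 18K | 24K | 30K | 36K | 38K | 32K | 26K | 20K |
| Aliased? | no | no | no | no | no | yes | yes | yes | yes |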

Notice that not only do we get another octave of headroom before aliasing occurs, we also get another octave above that where the resulting aliasing components are higher than the target nyquist, and will then be filtered out when downsampling back to 40KHz. In other words, the aliasing components at 38K, 32K, and 26K will not be audible, so unlike the 1x version, which aliases audibly at the 4th harmonic, the 2x version can go up to the 10th harmonic before aliasing becomes a problem.
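The same reasoning can be sketched in Python to see how far each oversampling factor gets you. This is only a rough model under my own assumptions: the hypothetical `first_audible_alias` helper treats the downsampling filter as a perfect brick wall at the target nyquist, and counts a component landing exactly on the target nyquist as removed.

```python
def alias_frequency(freq_hz, sample_rate_hz):
    """Return where a component at freq_hz lands after sampling at sample_rate_hz."""
    nyquist = sample_rate_hz / 2
    folded = freq_hz % sample_rate_hz
    return sample_rate_hz - folded if folded > nyquist else folded


def first_audible_alias(fundamental_hz, target_rate_hz, factor, max_harmonic=1000):
    """Return the lowest harmonic that aliases back below the target nyquist."""
    oversampled_rate = target_rate_hz * factor
    oversampled_nyquist = oversampled_rate / 2
    target_nyquist = target_rate_hz / 2
    for n in range(2, max_harmonic + 1):
        true_freq = n * fundamental_hz
        if true_freq <= oversampled_nyquist:
            continue  # represented correctly at the oversampled rate
        landed = alias_frequency(true_freq, oversampled_rate)
        # Assumption: an ideal downsampling filter removes everything at or
        # above the target nyquist, so only lower components remain audible.
        if landed < target_nyquist:
            return n
    return None


for factor in (1, 2, 4):
    print(f"{factor}x: first audible alias at harmonic",
          first_audible_alias(6000, 40000, factor))
```

For the 6KHz example this gives the 4th harmonic at 1x and the 11th at 2x, which matches the tables above. Real filters are not brick walls, so treat the numbers as a best case.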

The amount of oversampling you need therefore depends on two things: how much harmonic distortion is generated by the process, and the highest frequencies present in the signal you are processing.

Compressors will add subtle harmonics, especially with fast attack or release times, but if you are processing a bass guitar (for example) the harmonics generated will probably not go high enough to cause aliasing. But if you are compressing a drum overhead with lots of HF cymbal crashes you may find you need 2x or 4x oversampling to avoid a brittle harsh quality creeping in from the aliasing distortion.

If you are distorting the drum overheads however, perhaps with a saturation effect, or with a compressor or EQ that models analog non-linearities, you may well need 8x oversampling or higher. (The latest version of [The Glue](https://cytomic.com…) provides an offline rendering mode with 256x oversampling! There is no way the compression would ever require this much oversampling, but the "Peak Clip" option uses clipping rather than limiting, ie: distortion.)

Obviously, the downside to oversampling is extra cpu load and extra latency: not only do you have to process twice as many samples per second (or 4 times, or 8 times or whatever), you also need up and down sampling filters. If these filters are simplified too far they can significantly colour the sound, or smear the phase at the high end, but 'perfect' linear phase filtering is costly in terms of cpu, and will add a significant amount of latency. Some plugs therefore allow you to specify that they run at the native samplerate during realtime playback, but oversample when rendering (Auto mode in the Voxengo plugs). The Glue even allows you to specify different oversampling amounts for playback and rendering, eg: 2x for playback but 8x (or 256x!) for rendering.

I'm a little wary of that to be honest: when I render I like to get exactly what I was hearing when I mixed, and I don't like to assume that the host will always realise that the latency has changed. (Reaper seems to cope ok to be honest, but why introduce an extra variable?)

I seem to be in heavy work avoidance mode today, so I've made some examples (I've been meaning to test the new extreme oversampling options in The Glue anyway)

aliasing examples

Warning: turn down your monitors! The files peak at about -20dBFS, but this will still be painful at high volume!

I started by generating a 5 second sine wave sweep from 1KHz to 10KHz, at 44.1KHz. I then ran this through The Glue, with the Peak Clip option turned on, and the makeup gain maxed at +40dB. In other words, distorting the sine wave almost into a square wave. Then I dropped the channel fader to -20dB to leave some headroom in the renders.
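For anyone who wants to build a similar test signal without the plugin, here is a rough Python sketch of the chain described above. It is not what The Glue does internally: I'm assuming a linear sweep and a plain hard clipper at full scale, and `clipped_sweep` and its parameters are names I invented for the example. It runs at 1x, so the output will alias just like the non-oversampled render.

```python
import math


def clipped_sweep(sample_rate=44100, seconds=5.0, f_start=1000.0, f_end=10000.0,
                  gain_db=40.0, fader_db=-20.0):
    """Sine sweep -> makeup gain -> hard clip at full scale -> fader trim."""
    gain = 10 ** (gain_db / 20)     # +40dB is a linear gain of 100
    fader = 10 ** (fader_db / 20)   # -20dB is a linear gain of 0.1
    sweep_rate = (f_end - f_start) / seconds  # Hz per second (linear sweep assumed)
    samples = []
    for i in range(int(sample_rate * seconds)):
        t = i / sample_rate
        # Phase is the integral of the instantaneous frequency f_start + sweep_rate * t.
        phase = 2 * math.pi * (f_start * t + 0.5 * sweep_rate * t * t)
        driven = gain * math.sin(phase)
        clipped = max(-1.0, min(1.0, driven))  # generic hard clipper (assumption)
        samples.append(fader * clipped)
    return samples


signal = clipped_sweep()
peak_db = 20 * math.log10(max(abs(s) for s in signal))
print(f"{len(signal)} samples, peak {peak_db:.1f} dBFS")
```

With the default settings the clipped sweep peaks at -20dBFS, the same level as the renders described here.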

I rendered 4 files:

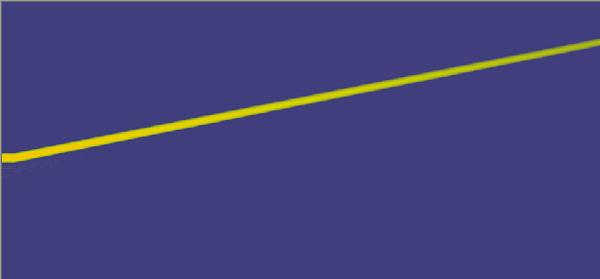

1: Bypass. The control, with The Glue bypassed. This is just a pure sine sweep as expected, and a wavelet transform looks like this:

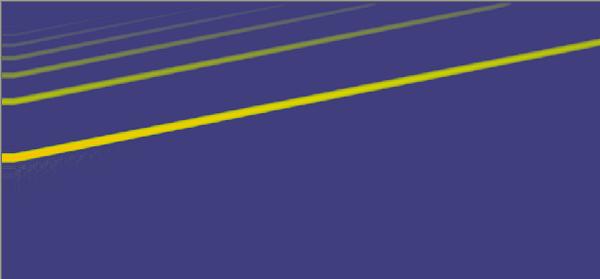

2: Distorted x1. No oversampling. Sounds awful, and looks a mess as well:

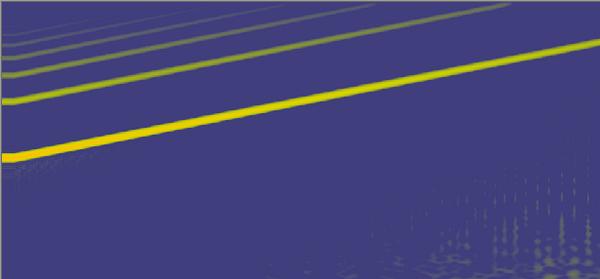

3: Distorted x8. 8x oversampling: sounds pretty good, but (in this extreme case) some chirpy nasties start to creep in towards the end:

4: Distorted x256. The maximum 256x oversampling, which is only available for offline renders. This is essentially perfect: all the harmonics up to nyquist, and nothing else. However, note that this 5 sec file took approx 50 secs to render, while the rest took basically no time at all.
